Datavant Life Sciences’ AI Platform: Scientific Rigor at Scale

Life sciences organizations face growing pressure to generate real-world evidence (RWE) faster and at greater scale for internal decision-making, publication, and external stakeholders, including regulators and payers.

Speed is now non-negotiable, but scientific rigor cannot be sacrificed.

As AI becomes embedded in evidence generation workflows, the bottleneck is no longer compute. It is trust: in the underlying scientific methods, in the decisions made while transforming data into evidence, and in an efficient path to execution with appropriate points for human review. Now more than ever, RWE generation, even when enabled by AI, must be supported by the subject-matter expertise required for valid inference and for responsible preparation and execution.

Datavant Life Sciences is addressing this challenge with an agentic platform purpose-built for responsible RWE generation at scale. We are developing and deploying agents that call the software tools already in use across our Datavant Connect and Aetion Evidence Platforms, operating within human-supervised workflows. Throughout the data-to-evidence continuum, scientists retain decision authority at defined review points. This agentic data discovery, data utility, and evidence generation platform is being designed and built according to three core operating principles that embed transparency, rigor, and RWE best practices into every stage:

- AI Foundation & Stepwise Agent Deployment.

- Scientifically-Informed Evals.

- Scientifically-Vetted Workflows.

In this blog, we outline how Datavant Life Sciences is approaching the development and deployment of agentic AI for RWE generation.

AI Foundation & Stepwise Agent Deployment

Before scaling agentic workflows, we are prioritizing the integration of validated training models, the construction of governed pipelines, and the development of scientific evaluation frameworks. Frontier LLMs serve as the core of our platform, constrained and operationalized through structured data, validated tools, and scientific evaluation layers. Hallucinations are mitigated through precise task scoping, clear instructions, and a retrieval-augmented generation (RAG) framework that contextualizes agents using years of scientific inputs, including the entire ecosystem of real-world data (RWD) sources, hundreds of deidentified/redacted study protocols, and prior analyses. Rather than relying on fixed, pre-programmed rules, our agents navigate the complexity inherent in RWE generation while remaining grounded in human oversight and validated scientific methods. In parallel, we are building the governance and validation infrastructure required for responsible use in life sciences, with a focus on auditability, regulatory compliance, and structured model performance testing.
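To make the retrieval step concrete, here is a minimal sketch of how a RAG layer can ground a question in stored scientific context before it reaches a model. It is illustrative only and not a description of Datavant's implementation: the `DOCUMENTS` corpus, the `retrieve` and `build_prompt` helpers, and the simple token-overlap scoring are all assumptions chosen for readability (production systems typically use embedding-based search).

```python
import re
from collections import Counter

# Hypothetical mini-corpus standing in for protocols and prior analyses.
DOCUMENTS = {
    "protocol_a": "New-user cohort design with a 365-day baseline washout period.",
    "protocol_b": "Propensity score matching to control for confounding by indication.",
    "data_note_c": "Claims data source with pharmacy and inpatient coverage.",
}


def tokenize(text):
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Return up to top_k document ids ranked by token overlap with the question."""
    question_tokens = tokenize(question)
    scored = []
    for doc_id, text in documents.items():
        overlap = sum((question_tokens & tokenize(text)).values())
        scored.append((overlap, doc_id))
    # Highest overlap first; ties broken alphabetically for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for overlap, doc_id in scored[:top_k] if overlap > 0]


def build_prompt(question, documents, top_k=2):
    """Prepend the retrieved passages so the model answers from known context."""
    ids = retrieve(question, documents, top_k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in ids)
    return f"Answer using only the context below.\n{context}\nQuestion: {question}"


print(build_prompt("How should we control for confounding?", DOCUMENTS))
```

The design point is that the model is asked to answer from retrieved, citable passages rather than from memory alone, which is what makes the output easier to audit.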

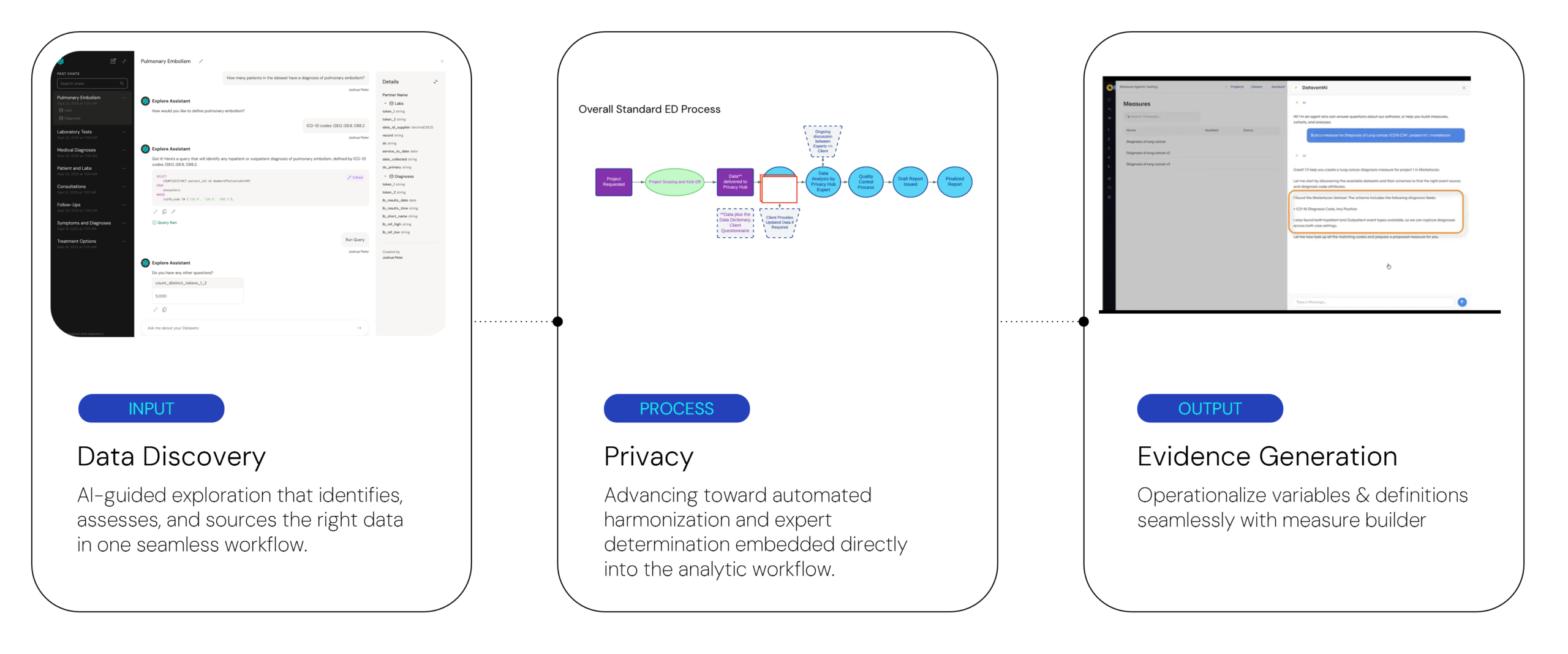

Consistent with our commitment to scientific rigor, agents are built stepwise across the data-to-evidence continuum. The approach starts with three focused use cases: data exploration, privacy assessment, and measure creation, which are the foundational components of data discovery, data utility, and insights/evidence generation, respectively. It then advances deliberately toward more complex workflows such as end-to-end study execution. Each agent operates within its own RAG context informed by our accumulated RWD and RWE experience. Each capability is introduced with oversight processes to review outputs, track performance, and enforce standards.

By starting with the core components, followed by a phased rollout across the evidence generation lifecycle, we are creating a continuous feedback loop for optimization and learning. This phasing allows customers to provide direct feedback on Datavant’s agentic platform build while also supporting fine-tuning of the agent evaluation framework, ultimately improving how agents perform to meet customer needs.

Agents operate across the data-to-evidence continuum, linking data discovery, privacy, and evidence generation within a structured and governed workflow.

Scientifically-Informed Evals

Scientific evals serve as the control system of our platform. Without them, agentic AI introduces unacceptable risk for us and our users. Datavant’s proprietary evaluation systems are embedded across all workflows and aligned with our best-practice scientific standards and established frameworks. Extensive experience across the RWD ecosystem underpins this framework, including a team of more than 50 epidemiologists who have implemented hundreds of study protocols over the past decade using our technology. This expertise is complemented by deep capabilities in data sourcing and fitness-for-purpose assessment, regulatory strategy, and large-scale data privacy operations. These capabilities support Datavant Life Sciences in developing and deploying agents that maintain the same level of scientific and operational rigor.

Agents must meet specific thresholds before production deployment. For example, evidence generation agents are benchmarked against historical cohort definitions to verify correct application of study eligibility criteria to complex RWD. Agents are also evaluated on their ability to identify fit-for-purpose data sources, correctly implement study algorithms, and appropriately control for confounding to achieve exchangeable patient populations. Our deep bench of epidemiologists and data scientists is building and refining these evaluations as we scale. Unlike traditional quality assurance (QA), our evals are scientific checks that test whether results would withstand scrutiny from regulators, clinicians, and statisticians. Agents iterate in a closed loop until pre-specified performance thresholds are met, and only then are they advanced into a per-project production workflow.
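As an illustration of threshold-gated evaluation, the sketch below compares the patient set an agent produces against a historical reference cohort and only passes attempts that clear pre-specified minimums. Everything here is an assumption made for the example: the function names, the choice of precision and recall as agreement metrics, and the 0.95 thresholds are hypothetical and do not describe Datavant's proprietary evals.

```python
def cohort_agreement(agent_ids, reference_ids):
    """Compare an agent-built cohort with a historical reference cohort."""
    agent, reference = set(agent_ids), set(reference_ids)
    overlap = len(agent & reference)
    # Precision: share of agent-selected patients who belong in the cohort.
    precision = overlap / len(agent) if agent else 0.0
    # Recall: share of reference patients the agent recovered.
    recall = overlap / len(reference) if reference else 0.0
    return {"precision": precision, "recall": recall}


def passes_gate(metrics, thresholds):
    """True only if every pre-specified threshold is met."""
    return all(metrics[name] >= minimum for name, minimum in thresholds.items())


def iterate_until_pass(attempts, reference_ids, thresholds):
    """Return (index, metrics) for the first attempt that clears the gate, else None."""
    for index, agent_ids in enumerate(attempts):
        metrics = cohort_agreement(agent_ids, reference_ids)
        if passes_gate(metrics, thresholds):
            return index, metrics
    return None


# Hypothetical example: the first attempt misapplies a criterion, the second matches.
reference = [1, 2, 3, 4]
attempts = [[1, 2, 9], [1, 2, 3, 4]]
thresholds = {"precision": 0.95, "recall": 0.95}  # illustrative values only
print(iterate_until_pass(attempts, reference, thresholds))
```

Fixing the thresholds before any attempt is scored keeps the gate from being tuned to whatever the agent happens to produce.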

Scientifically-Vetted Workflows

Our agents do not generate RWE in a vacuum. Instead, they operate the same validated software tools within the Datavant Connect and Aetion Evidence platforms, while following the quality control and assurance workflows our teams have used for years, with humans incorporated at key decision and review points along the data-to-evidence continuum. The knowledge base built on over a decade of scientific experience and leadership serves as the foundation for how agents invoke and apply these tools.

Extending this approach across the platform, we will support the full RWE lifecycle, from data discovery and privacy assessment to insights and evidence generation. Agents are deployed across our existing qualified tools, which serve as the standard for both our customers and internal scientific teams. Incorporating agents into human-supervised workflows gives scientists more time to focus on study design, data fit-for-purpose assessment, interpretation, and evidence synthesis, with the goal of augmenting rather than replacing non-agentic RWE workflows.

Humans remain in the loop at defined decision points across the data-to-evidence continuum. These decision points may vary by stakeholder (e.g., regulator, internal decision-making) and support modular workflows across customer needs. For example, following data source identification, our data insights team reviews agent recommendations against prior experience and benchmarks. Following cohort generation, scientists review attrition tables to confirm that eligibility criteria are applied as expected given the indication and data source. Post-deployment, agent performance is monitored continuously, with successful configurations scaled across additional workflows over time. Taken together, these practices enable production-grade RWE that is reproducible, auditable, and decision-ready.
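For readers less familiar with attrition tables, the following sketch shows the kind of artifact a scientist would review after cohort generation: eligibility criteria applied in sequence, with the number of patients remaining and excluded at each step. The patient records, field names, and criteria are hypothetical and exist only to illustrate the idea; they are not drawn from any Datavant or Aetion tool.

```python
def attrition_table(patients, criteria):
    """Apply criteria in order; return (label, remaining, excluded) per step."""
    rows = [("Starting population", len(patients), 0)]
    remaining = list(patients)
    for label, keep in criteria:
        kept = [patient for patient in remaining if keep(patient)]
        rows.append((label, len(kept), len(remaining) - len(kept)))
        remaining = kept
    return rows


# Hypothetical patient records and eligibility criteria.
patients = [
    {"id": 1, "age": 54, "baseline_days": 400, "prior_event": False},
    {"id": 2, "age": 17, "baseline_days": 500, "prior_event": False},
    {"id": 3, "age": 61, "baseline_days": 120, "prior_event": False},
    {"id": 4, "age": 70, "baseline_days": 730, "prior_event": True},
]
criteria = [
    ("Age >= 18", lambda p: p["age"] >= 18),
    ("Baseline enrollment >= 365 days", lambda p: p["baseline_days"] >= 365),
    ("No prior event", lambda p: not p["prior_event"]),
]

for label, remaining, excluded in attrition_table(patients, criteria):
    print(f"{label:<35} remaining={remaining} excluded={excluded}")
```

An unexpectedly large drop at a single step is the sort of signal that would prompt a reviewer to check how that criterion was implemented for the given indication and data source.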

What AI in RWE Enables

By accelerating key steps across the data-to-evidence continuum, Datavant’s platform enables more efficient and timely distribution of evidence across functional teams within life sciences organizations. This includes:

- Data discovery: Faster identification of fit-for-purpose data sources, reducing a common bottleneck and accelerating time to insight and evidence. Explore Assistant enables interactive assessment of individual and linked datasets, allowing teams to evaluate feasibility earlier in the process.

- Privacy assessment of linked or stacked data sources: Automation that reduces delays between data access and the ability to use the data.

- Evidence generation: Agent-driven measure development, cohort generation, and analysis that compresses study implementation timelines from months to weeks. This includes Measures Builder, where users define reusable study algorithms through guided natural language workflows.

Explore Assistant converts natural language questions into executable queries, enabling real-time exploration of cohort definitions and dataset characteristics.

Embedding rigorous and trusted agents throughout the data-to-evidence continuum will result in RWE practitioners spending less time on execution and more time on interpretation. The focus shifts to what the evidence means, and what decisions it can inform across the clinical development lifecycle and commercial strategy.

Why Datavant’s Approach Matters

Our principled approach ensures that AI-enabled evidence generation strengthens, rather than undermines, the scientific rigor that supports credible RWE. Without this foundation and scientific expertise, the use of AI in evidence generation risks eroding the trust built over the past decade around the reliability, transparency, and validity of RWD/E.

In a series of subsequent blog posts, we will go a level deeper into what agentic AI means at each phase of the data-to-evidence continuum and how Datavant Life Sciences is taking a task-oriented approach to building, evaluating, and deploying agents across our existing workflows.

To learn more about how Datavant is enabling responsible, AI-driven RWE generation at every stage, connect with our team here.